Heuristics for systems

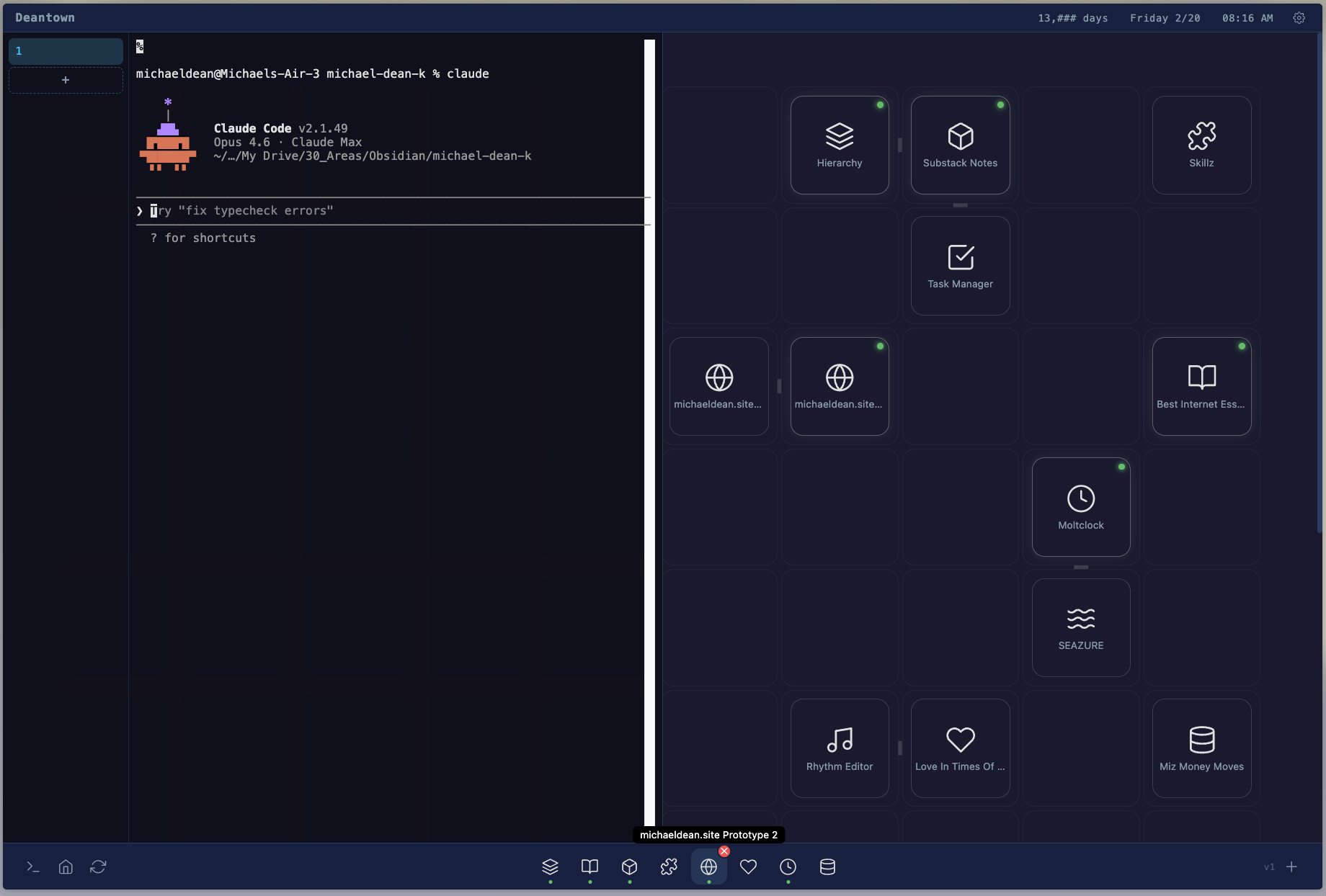

I declared to my wife this morning that DeantownOS is getting retired. It’s been 3 months since I spiraled into Claude Code for personal systems, and I’m at the point in the curve where the amazement has normalized and I’ve accepted the fact that I’m in a trough of disillusionment. The question now is revise or abort.

The case for aborting ties back to Oliver Burkeman’s Four Thousand Weeks, which popularized the idea that all systems are methods to procrastinate from making hard decisions. They give the illusion that you can do everything, and since AI can meaningfully leverage the volume and range of things you can do, it tempts you to build galaxy-brained systems. The thing I think we fail to realize while in a vibe coding frenzy is the psychic cost to remember and maintain the stuff you build. Yes, it is appealing to “reclaim my computer” and rebuild everything I use as personal software (from Obsidian to Gmail), and it’s even possible, but it’s a new breed of Sisyphean struggle. Once you can mold your own software around you, it’s too easy to endlessly mold, to lose sight of the work and just tinker on your exoskeleton.

I’m obviously skeptical, but I’m still a believer; if I were to revise, to rebuild my Claude stack from scratch, I would have to develop a few heuristics to keep me from short-circuiting.

The first one that comes to mind is “will this matter once I’m dead?” For example: writing an essay matters, because I imagine one day my daughter will read it and get to know me better, or at the very least, future Me in 35 years may enjoy reading words of my past self. But to create detailed daily files that get spliced into atomic “routing files” that then get saved again to a new destination folder, which exist either as (a) just context for AI, or (b) require some manual effort to prune into something that matters once I’m dead, is to create waaaay too many layers of abstraction between the source and the Work. When I read back my writing from the last few months, only a small fraction is valuable enough to be saved as "logs" in my archive. I was writing for AI, not for my future self.

I made this assumption that atomic daily files are the kernel of a system, and it was an axiom I could never undo. There’s maybe another principle on “don’t build load-bearing infrastructure on an unproven axiom.”

Another one could be “don’t assume future you will have bandwidth” to do X every day/week/month. Every day I had to review how my AI system proposed to route my logs, and eventually I'd ignore it and get backed up. This means that if something isn’t truly automated, I should be very cautious of it. It's possible to do one little step forever, but not a hundred. Not every promise has brush-your-teeth-scale reliability.

What I’m getting at is that it’s not about maximizing or neglecting systems, but about understanding the right principles so you build something that is actually in service of your life.